This is a chapter-by-chapter collection of notes and quotes for the book Accelerate: The Science of Lean Software and DevOps: Building and Scaling High Performing Technology Organizations, Nicole Forsgren PhD, Jez Humble, Gene Kim.

Any quotes from the book in this article are copyrighted. My intent is to provide an overview of what struck me in the material, and to encourage any visitor to purchase and read this important work.

History

- 2018-10-14 Chapters 1-5 released

- 2018-11-05 Chapters 6-7 added

- 2018-11-06 Chapter 8 added

- 2018-11-17 Chapters 9-10 added

- 2018-12-12 Chapter 11 added, ending Part 1

- 2019-01-05 Part 1 notes and quotes cleaned up. Added recommended actions.

- 2019-01-22 Parts 2 and 3 (chapters 12-16) added. Project finished.

- My Top-Level Recommended Actions

- Quick Reference: Capabilities to Drive Improvement

- Preface

- Part 1: What We Found

- Chapter 1 - Accelerate

- Chapter 2 - Measuring Performance

- Chapter 3 - Measuring and Changing Culture

- Chapter 4 - Technical Practices

- Chapter 5 - Architecture

- Chapter 6 - Integrating InfoSec Into the Delivery Lifecycle

- Chapter 7 - Management Practices for Software

- Chapter 8 - Product Development

- Chapter 9 - Making Work Sustainable

- Chapter 10 - Employee Satisfaction, Identity, and Engagement

- Chapter 11 - Leaders and Managers

- Part 2 - The Research

- Part 3 - Transformation

My Top-Level Recommended Actions

Where would I start implementing DevOps in an organization? These are my current opinions, based on the material.

- Build Continuous Delivery. Putting CD in place will affect the entire department, code base, and ultimately the organization's culture because of faster, more certain releases. It will reveal architectural, versioning and hardware environment problems, and (should) force monitoring what matters.

- Increase Staff Diversity and Inclusivity. The research strongly supports this. A more diverse and inclusive environment--when well-supported--leads to higher performance and business return.

- Foster a Generative Culture. An organizational culture that values cooperation, experimentation, risk-to-failure, and mission-focus will thrive. This can be driven from the bottom-up, but still requires management/group support.

Quick Reference: Capabilities to Drive Improvement

Our research has uncovered 24 key capabilities that drive improvements in software delivery performance. This reference will point you to them in the book.

The capabilities are classified into five categories:

- Continuous delivery

- Architecture

- Product and process

- Lean management and monitoring

- Cultural

CONTINUOUS DELIVERY CAPABILITIES

1. Version control: Chapter 4

2. Deployment automation: Chapter 4

3. Continuous integration: Chapter 4

4. Trunk-based development: Chapter 4

5. Test automation: Chapter 4

6. Test data management: Chapter 4

7. Shift left on security: Chapter 6

8. Continuous delivery (CD): Chapter 4

ARCHITECTURE CAPABILITIES

9. Loosely coupled architecture: Chapter 5

10. Empowered teams: Chapter 5

PRODUCT AND PROCESS CAPABILITIES

11. Customer feedback: Chapter 8

12. Value stream: Chapter 8

13. Working in small batches: Chapter 8

14. Team experimentation: Chapter 8

LEAN MANAGEMENT AND MONITORING CAPABILITIES

15. Change approval processes: Chapter 7

16. Monitoring: Chapter 7

17. Proactive notification: Chapter 13

18. WIP limits: Chapter 7

19. Visualizing work: Chapter 7

CULTURAL CAPABILITIES

20. Westrum organizational culture: Chapter 3

21. Supporting learning: Chapter 10

22. Collaboration among teams: Chapters 3 and 5

23. Job satisfaction: Chapter 10

24. Transformational leadership: Chapter 11

Preface

Notes

Accelerate is the product of four years of serious, peer-reviewed research. It isn't guesswork or opinions from the field. It's validated.

The book is divided into three parts: the research results, the methodology, and a case study.

Quotes

Beginning in late 2013, we embarked on a four-year research journey to investigate what capabilities and practices are important to accelerate the development and delivery of software and, in turn, value to companies.

Part 1: What We Found

Chapter 1 - Accelerate

Actionable Takeaways

- Use smaller teams and adequately support them at an organizational level using DevOps principles.

Notes

None of these factors predicts performance:

- age and technology used for the application (for example, mainframe “systems of record” vs. greenfield “systems of engagement”)

- whether operations teams or development teams performed deployments

- whether a change approval board (CAB) is implemented

Maturity models don't work. Capability models do.

Companies, even big ones, are moving away from big projects, instead using small teams and short development cycles.

Quotes

To summarize, in 2017 we found that, when compared to low performers, the high performers have:

- 46 times more frequent code deployments

- 440 times faster lead time from commit to deploy

- 170 times faster mean time to recover from downtime

- 5 times lower change failure rate (1/5 as likely for a change to fail)

DevOps emerged from a small number of organizations facing a wicked problem: how to build secure, resilient, rapidly evolving distributed systems at scale.

A recent Forrester (Stroud et al. 2017) report found that 31% of the industry is not using practices and principles that are widely considered to be necessary for accelerating technology transformations, such as continuous integration and continuous delivery, Lean practices, and a collaborative culture (i.e., capabilities championed by the DevOps movement).

Another Forrester report states that DevOps is accelerating technology, but that organizations often overestimate their progress (Klavens et al. 2017). Furthermore, the report points out that executives are especially prone to overestimating their progress when compared to those who are actually doing the work.

Chapter 2 - Measuring Performance

Actionable Takeaways

- Measure software performance using delivery lead time, deployment frequency, time to restore service, and change fail rate.

- Keep development of strategic software in-house.

Notes

The academic rigor of this book is exceptionally high.

It's important to distinguish between strategic and non-strategic software. Strategic software should be kept in-house. See Simon Wardley and the Wardley mapping method. (I think understanding and using Wardley's mapping techniques will take some time. They look valuable, but there may not be a quick answer on strategic vs non-strategic software.)

The stats on "the impact of delivery performance on organization performance" show that software development and IT are not cost centers; they can provide a competitive advantage.

Two characteristics of successful measures: focus on global outcomes, and focus on outcomes not output.

Lead Time is "the time it takes to go from a customer making a request to the request being satisfied." The authors focused on the delivery part of lead time--"the time it takes for work to be implemented, tested, and delivered."
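The four measures named in the takeaways above can be computed from a simple log of deployments. The following is a minimal sketch, not something taken from the book: the `Deployment` record, its field names, and the choice of the median as the summary statistic are all assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """One production deployment (hypothetical record shape)."""
    committed_at: datetime                   # when the change was committed
    deployed_at: datetime                    # when it reached production
    failed: bool = False                     # did the change degrade service?
    restored_at: Optional[datetime] = None   # when service was restored, if it failed


def four_key_metrics(deployments: list[Deployment], days_observed: int) -> dict:
    """Summarize the four delivery measures over an observation window."""
    if not deployments or days_observed <= 0:
        raise ValueError("need at least one deployment and a positive window")

    # Delivery lead time: commit to running in production.
    lead_times = [d.deployed_at - d.committed_at for d in deployments]

    # Failed changes, and how long each took to restore (when known).
    failures = [d for d in deployments if d.failed]
    restore_times = [
        d.restored_at - d.deployed_at
        for d in failures
        if d.restored_at is not None
    ]

    return {
        "median_lead_time": median(lead_times),
        "deploys_per_day": len(deployments) / days_observed,
        "median_time_to_restore": median(restore_times) if restore_times else None,
        "change_fail_rate": len(failures) / len(deployments),
    }
```

Reporting all four numbers together matters here: the first two describe throughput and the last two describe stability, and the book's claim is that high performers do well on both.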

Quotes

There are many frameworks and methodologies that aim to improve the way we build software products and services. We wanted to discover what works and what doesn’t in a scientific way,...

In our search for measures of delivery performance,... we settled on four: delivery lead time, deployment frequency, time to restore service, and change fail rate.

Astonishingly, these results demonstrate that there is no tradeoff between improving performance and achieving higher levels of stability and quality. Rather, high performers do better at all of these measures. This is precisely what the Agile and Lean movements predict,...

It’s worth noting that the ability to take an experimental approach to product development is highly correlated with the technical practices that contribute to continuous delivery.

The fact that software delivery performance matters provides a strong argument against outsourcing the development of software that is strategic to your business, and instead bringing this capability into the core of your organization.

The measurement tools can be used by any organization,...

However, it is essential to use these tools carefully. In organizations with a learning culture, they are incredibly powerful. But “in pathological and bureaucratic organizational cultures, measurement is used as a form of control, and people hide information that challenges existing rules, strategies, and power structures. As Deming said, 'whenever there is fear, you get the wrong numbers'”

Chapter 3 - Measuring and Changing Culture

Actionable Takeaways

- Aim for a Generative culture.

- Increase information flow.

Notes

Ron Westrum's three characteristics of good information:

- Provides the needed answers

- Timely

- Presented so it can be used effectively

A good culture:

- Requires trust and cooperation

- Has higher-quality decision-making

- Teams do a better job with their people

Quotes

Ron Westrum's three kinds of organizational culture

| Pathological | (power-oriented) organizations are characterized by large amounts of fear and threat. People often hoard information or withhold it for political reasons, or distort it to make themselves look better. |

| Bureaucratic | (rule-oriented) organizations protect departments. Those in the department want to maintain their “turf,” insist on their own rules, and generally do things by the book — their book. |

| Generative | (performance-oriented) organizations focus on the mission. How do we accomplish our goal? Everything is subordinated to good performance, to doing what we are supposed to do. |

Table 3.1 Westrum's Typology of Organizational Culture.

| Pathological (Power-Oriented) | Bureaucratic (Rule-Oriented) | Generative (Performance-Oriented) |
|---|---|---|
| Low cooperation | Modest cooperation | High cooperation |
| Messengers “shot” | Messengers neglected | Messengers trained |
| Responsibilities shirked | Narrow responsibilities | Risks are shared |
| Bridging discouraged | Bridging tolerated | Bridging encouraged |
| Failure leads to scapegoating | Failure leads to justice | Failure leads to inquiry |
| Novelty crushed | Novelty leads to problems | Novelty implemented |

Westrum’s theory posits that organizations with better information flow function more effectively.

Thus, accident investigations that stop at “human error” are not just bad but dangerous. Human error should, instead, be the start of the investigation.

Our research shows that Lean management, along with a set of other technical practices known collectively as continuous delivery (Humble and Farley 2010), do in fact impact culture,...

Using the Likert-scale questionnaire

To calculate the “score” for each survey response, take the numerical value (1-7) corresponding to the answer to each question and calculate the mean across all questions. Then you can perform statistical analysis on the responses as a whole.
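The scoring rule described above is small enough to sketch directly. This is an illustrative sketch only: the function name `response_score` and the range check are assumptions, and the example answers are made up rather than taken from any real survey.

```python
def response_score(answers: list[int], low: int = 1, high: int = 7) -> float:
    """Return the mean of one respondent's Likert answers (1-7 by default)."""
    if not answers:
        raise ValueError("a response needs at least one answer")
    for answer in answers:
        # Reject values outside the scale instead of silently averaging them.
        if not low <= answer <= high:
            raise ValueError(f"answer {answer} is outside the {low}-{high} scale")
    return sum(answers) / len(answers)


# One hypothetical respondent answering four questions.
score = response_score([6, 7, 5, 6])
```

Each respondent ends up with a single number, and the statistical analysis is then run over the collection of those numbers.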

Chapter 4 - Technical Practices

Actionable Takeaways

- Implement Continuous Delivery. It brings a fundamental change in development and culture.

Notes

Keeping system and application configuration in version control is more important to delivery performance than keeping code in version control. (But both are important).

Continuous Delivery practices will help improve culture. However, implementing the practices "often requires rethinking everything".

Quotes

A key goal of continuous delivery is changing the economics of the software delivery process so the cost of pushing out individual changes is very low.

Implementing continuous delivery means creating multiple feedback loops to ensure that high-quality software gets delivered to users more frequently and more reliably.

Continuous delivery is a set of capabilities that enable us to get changes of all kinds — features, configuration changes, bug fixes, experiments — into production or into the hands of users safely, quickly, and sustainably. There are five key principles at the heart of continuous delivery:

- Build Quality In

Invest in building a culture supported by tools and people where we can detect any issues quickly, so that they can be fixed straight away when they are cheap to detect and resolve.

- Work in Small Batches

By splitting work up into much smaller chunks that deliver measurable business outcomes quickly for a small part of our target market, we get essential feedback on the work we are doing so that we can course correct.

- Computers perform repetitive tasks; people solve problems

One important strategy to reduce the cost of pushing out changes is to take repetitive work that takes a long time, such as regression testing and software deployments, and invest in simplifying and automating this work.

- Relentlessly pursue continuous improvement

The most important characteristic of high-performing teams is that they are never satisfied: they always strive to get better.

- Everyone is responsible

in bureaucratic organizations teams tend to focus on departmental goals rather than organizational goals. Thus, development focuses on throughput, testing on quality, and operations on stability. However, in reality these are all system-level outcomes, and they can only be achieved by close collaboration between everyone involved in the software delivery process. A key objective for management is making the state of these system-level outcomes transparent, working with the rest of the organization to set measurable, achievable, time-bound goals for these outcomes, and then helping their teams work toward them.

In order to implement continuous delivery, we must create the following foundations:

- Comprehensive configuration management

It should be possible to provision our environments and build, test, and deploy our software in a fully automated fashion purely from information stored in version control.

- Continuous integration

High-performing teams keep branches short-lived (less than one day’s work) and integrate them into trunk/master frequently. Each change triggers a build process that includes running unit tests. If any part of this process fails, developers fix it immediately.

- Continuous testing

Automated unit and acceptance tests should be run against every commit to version control to give developers fast feedback on their changes. Developers should be able to run all automated tests on their workstations in order to triage and fix defects.

Chapter 5 - Architecture

Actionable Takeaways

- Invest lots of time in a loosely-coupled, testable architecture.

- Let teams choose their tools (except security tools, which should be approved and readily available).

Notes

As the quote below says, it's important that teams be loosely coupled, not just the architecture. But how does this apply to a small software/IT shop of, say, a half dozen employees? I see team autonomy coming up quite a bit: not having to ask someone outside the team for permission to make a change, and changes not strongly affecting other teams. This is a result of loose coupling.

The team has to be vigilant about ensuring the ability to independently test services.

A good, loosely-coupled architecture allows not just the software but the teams to scale. Contrary to assumptions, adding employees when there's a proper software/team architecture leads to increasing deployment frequency.

Quotes

We found that high performance is possible with all kinds of systems, provided that systems—and the teams that build and maintain them—are loosely coupled.

We discovered that low performers were more likely to say that the software they were building—or the set of services they had to interact with—was custom software developed by another company (e.g., an outsourcing partner).... In the rest of the cases, there was no significant correlation between system type and delivery performance. We found this surprising: we had expected teams working on packaged software, systems of record, or embedded systems to perform worse, and teams working on systems of engagement and greenfield systems to perform better. The data shows that this is not the case.

This reinforces the importance of focusing on the architectural characteristics, discussed below, rather than the implementation details of your architecture.

Those who agreed with the following statements were more likely to be in the high-performing group:

- We can do most of our testing without requiring an integrated environment.

- We can and do deploy or release our application independently of other applications / services it depends on.

When teams can decide which tools they use, it contributes to software delivery performance and, in turn, to organizational performance.

That said, there is a place for standardization, particularly around the architecture and configuration of infrastructure.

Another finding in our research is that teams that build security into their work also do better at continuous delivery. A key element of this is ensuring that information security teams make preapproved, easy-to-consume libraries, packages, toolchains, and processes available for developers and IT operations to use in their work.

Architects should focus on engineers and outcomes, not tools or technologies.... What tools or technologies you use is irrelevant if the people who must use them hate using them, or if they don’t achieve the outcomes and enable the behaviors we care about. What is important is enabling teams to make changes to their products or services without depending on other teams or systems.

Chapter 6 - Integrating InfoSec Into the Delivery Lifecycle

Actionable Takeaways

- Move security to the beginning of--and throughout--development.

Notes

InfoSec is everyone's responsibility.

Don't leave security reviews until the end of development. Security is equal to Development and Operations.

Quotes

Many developers are ignorant of common security risks, such as the OWASP Top 10.

What does "shifting left" [on security] mean?

- First, security reviews are conducted for all major features, and this review process is performed in such a way that it doesn’t slow down the development process.

- The second aspect of this capability: information security should be integrated into the entire software delivery lifecycle from development through operations.

- Finally, we want to make it easy for developers to do the right thing when it comes to infosec.

We found that high performers were spending 50% less time remediating security issues than low performers.

Rugged DevOps is the combination of DevOps with the Rugged Manifesto.

Chapter 7 - Management Practices for Software

Actionable Takeaways

- Use a kanban (or similar visual) board.

- Get rid of external change approval.

Notes

Lean management, as applied to software, currently yields better results than other practices.

Four components of Lean management applied to software:

- Limit Work in Progress (WIP)

- Visual Management

- Feedback from Production

- Lightweight Change Approvals
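To make the first component concrete, a WIP limit can be modeled as a column on a board that refuses new work once it is full. This is a hypothetical sketch for illustration only: `KanbanColumn`, `WipLimitExceeded`, and the card names are invented here and do not come from the book or from any particular tool.

```python
class WipLimitExceeded(Exception):
    """Raised when a column is already at its work-in-progress limit."""


class KanbanColumn:
    """A single board column that enforces a WIP limit."""

    def __init__(self, name: str, wip_limit: int) -> None:
        self.name = name
        self.wip_limit = wip_limit
        self.cards: list[str] = []

    def pull(self, card: str) -> None:
        """Start work on a card, unless the column is already full."""
        if len(self.cards) >= self.wip_limit:
            raise WipLimitExceeded(
                f"'{self.name}' is at its limit of {self.wip_limit}; "
                "finish something before starting more"
            )
        self.cards.append(card)

    def finish(self, card: str) -> None:
        """Complete a card, freeing a slot in the column."""
        self.cards.remove(card)


in_progress = KanbanColumn("In progress", wip_limit=2)
in_progress.pull("fix login bug")
in_progress.pull("add audit log")
# A third pull would raise WipLimitExceeded until one card is finished.
```

The point of raising an error rather than queueing the card is that hitting the limit is meant to prompt a conversation about what is blocking the work already in progress.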

Quotes

Lean management components modeled by the authors:

- Limiting work in progress (WIP), and using these limits to drive process improvement and increase throughput

- Creating and maintaining visual displays showing key quality and productivity metrics and the current status of work (including defects), making these visual displays available to both engineers and leaders, and aligning these metrics with operational goals

- Using data from application performance and infrastructure monitoring tools to make business decisions on a daily basis

WIP limits on their own do not strongly predict delivery performance. It’s only when they’re combined with the use of visual displays and have a feedback loop from production monitoring tools back to delivery teams or the business that we see a strong effect.

We found that approval only for high-risk changes was not correlated with software delivery performance. Teams that reported no approval process or used peer review achieved higher software delivery performance. Finally, teams that required approval by an external body achieved lower performance.

We found that external approvals were negatively correlated with lead time, deployment frequency, and restore time, and had no correlation with change fail rate. In short, approval by an external body (such as a manager or CAB) simply doesn’t work to increase the stability

Chapter 8 - Product Development

Actionable Takeaways

- My suggestion to start with: Make Flow of Work Visible (a kanban board will help)

Notes

Lean Product Development

- Work in Small Batches

- Make Flow of Work Visible

- Gather and Implement Customer Feedback

- Team Experimentation

Quotes

The Agile brand has more or less won the methodology wars. However, much of what has been implemented is faux Agile—people following some of the common practices while failing to address wider organizational culture and processes.

We wanted to test whether these [Lean] practices have a direct impact on organizational performance, measured in terms of productivity, market share, and profitability.

- The extent to which teams slice up products and features into small batches that can be completed in less than a week and released frequently, including the use of MVPs (minimum viable products).

- Whether teams have a good understanding of the flow of work from the business all the way through to customers, and whether they have visibility into this flow, including the status of products and features.

- Whether organizations actively and regularly seek customer feedback and incorporate this feedback into the design of their products.

- Whether development teams have the authority to create and change specifications as part of the development process without requiring approval.

It’s worth noting that an experimental approach to product development is highly correlated with the technical practices that contribute to continuous delivery.

Chapter 9 - Making Work Sustainable

Actionable Takeaways

- Reduce deployment pain. I suggest starting with automated reproduction of production state (to work with Continuous Deployment).

- Make sure the stated organizational values align with the actual, lived values.

Notes

Huh. No notes on this chapter.

Quotes

The fear and anxiety that engineers and technical staff feel when they push code into production can tell us a lot about a team’s software delivery performance.

We call this deployment pain, and it is important to measure because it highlights the friction and disconnect that exist between the activities used to develop and test software and the work done to maintain and keep software operational. This is where development meets IT operations, and it is where there is the greatest potential for differences: in environment, in process and methodology, in mindset, and even in the words teams use to describe the work they do.

Where code deployments are most painful, you’ll find the poorest software delivery performance, organizational performance, and culture. [emphasis mine]

In order to reduce deployment pain, we should:

- Build systems that are designed to be deployed easily into multiple environments, can detect and tolerate failures in their environments, and can have various components of the system updated independently

- Ensure that the state of production systems can be reproduced (with the exception of production data) in an automated fashion from information in version control

- Build intelligence into the application and the platform so that the deployment process can be as simple as possible

Burnout is physical, mental, or emotional exhaustion caused by overwork or stress — but it is more than just being overworked or stressed. Burnout can make the things we once loved about our work and life seem insignificant and dull. It often manifests itself as a feeling of helplessness, and is correlated with pathological cultures and unproductive, wasteful work.

Job stress also affects employers, costing the US economy $300 billion per year in sick time, long-term disability, and excessive job turnover (Maslach 2014). Thus, employers have both a duty of care toward employees and a fiduciary obligation to ensure staff do not become burned out.

Our own research tells us which organizational factors are most strongly correlated with high levels of burnout, and suggests where to look for solutions. The five most highly correlated factors are:

- Organizational culture. Strong feelings of burnout are found in organizations with a pathological, power-oriented culture.

- Deployment pain. Complex, painful deployments that must be performed outside of business hours contribute to high stress and feelings of lack of control.

- Effectiveness of leaders. Responsibilities of a team leader include limiting work in process and eliminating roadblocks for the team so they can get their work done.

- Organization investments in DevOps. Organizations that invest in developing the skills and capabilities of their teams get better outcomes.

- Organizational performance. Our data shows that Lean management and continuous delivery practices help improve software delivery performance, which in turn improves organizational performance.

It is important to note that the organizational values we mention here are the real, actual, lived organizational values felt by employees. If the organizational values felt by employees differ from the official values of the organization — the mission statements printed on pieces of paper or even on placards — it will be the everyday, lived values that count. If there is a values mismatch — either between an employee and their organization, or between the organization’s stated values and their actual values — burnout will be a concern. When there is alignment, employees will thrive.

Chapter 10 - Employee Satisfaction, Identity, and Engagement

Actionable Takeaways

- Increase a culture of experimentation and learning.

- Increase staff diversity and inclusivity.

Notes

No notes again. The quotes tell the tale.

Quotes

We found that employees in high-performing teams were 2.2 times more likely to recommend their organization to a friend as a great place to work, and 1.8 times more likely to recommend their team to a friend. This is a significant finding, as research has shown that “companies with highly engaged workers grew revenues two and a half times as much as those with low engagement levels.”

To measure [identification with the organization], we asked people the extent to which they agreed with the following statements (adapted from Kankanhalli et al. 2005):

- I am glad I chose to work for this organization rather than another company.

- I talk of this organization to my friends as a great company to work for.

- I am willing to put in a great deal of effort beyond what is normally expected to help my organization be successful.

- I find that my values and my organization’s values are very similar.

- In general, the people employed by my organization are working toward the same goal.

- I feel that my organization cares about me.

Our analysis is clear: in today’s fast-moving and competitive world, the best thing you can do for your products, your company, and your people is institute a culture of experimentation and learning, and invest in the technical and management capabilities that enable it.

Automation matters because it gives over to computers the things computers are good at — rote tasks that require no thinking.... Being able to apply one’s judgment and experience to challenging problems is a big part of what makes people satisfied with their work.

Diversity matters. Research shows that teams with more diversity with regard to gender or underrepresented minorities are smarter (Rock and Grant 2016), achieve better team performance (Deloitte 2013), and achieve better business outcomes (Hunt et al. 2015).

It is also important to note that diversity is not enough. Teams and organizations must also be inclusive. An inclusive organization is one where “all organizational members feel welcome and valued for who they are and what they ‘bring to the table.’ All stakeholders share a high sense of belonging and fulfilled mutual purpose” (Smith and Lindsay 2014, p. 1). Inclusion must be present in order for diversity to take hold.

A study conducted by Anita Woolley and Thomas W. Malone measured group intelligence and found that teams with more women tended to fall above average on the collective intelligence scale (Woolley and Malone 2011). Despite all of these clear advantages, organizations are failing to recruit and retain women in technical fields.

Women and underrepresented minorities report harassment, microaggressions, and unequal pay (e.g., Mundy 2017). These are all things we can actively watch for and improve as leaders and peers.

Chapter 11 - Leaders and Managers

Actionable Takeaways

- Hire or train leader-managers who build a learning culture.

- Again, let developers choose their tools.

Notes

- We [developers] don't like managers who get in the way, but we recognize that good leaders make a positive difference toward success.

Five Characteristics of a transformational leader:

- Vision. Has a clear understanding of where the organization is going and where it should be in five years.

- Inspirational communication. Communicates in a way that inspires and motivates, even in an uncertain or changing environment.

- Intellectual stimulation. Challenges followers to think about problems in new ways.

- Supportive leadership. Demonstrates care and consideration of followers’ personal needs and feelings.

- Personal recognition. Praises and acknowledges achievement of goals and improvements in work quality; personally compliments others when they do outstanding work.

Transformational leadership helps, but it isn't enough:

They need their teams executing the work on a suitable architecture, with good technical practices, use of Lean principles, and all the other factors that we’ve studied over the years.

It's interesting that leaders don't have to be managers, and vice-versa. When managers are leaders, that's very powerful.

Managers should make performance metrics visible and aligned with organizational goals.

Prioritize working on technical debt.

Tips:

Enable cross-functional collaborations by:

- Building trust with your counterparts on other teams.

- Encouraging practitioners to move between departments.

- Actively seeking, encouraging, and rewarding work that facilitates collaboration.

Help create a climate of learning by:

- Creating a training budget and advocating for it internally.

- Ensuring that your team has the resources to engage in informal learning and the space to explore ideas.

- Making it safe to fail.

- Creating opportunities and spaces to share information.

- Encouraging sharing and innovation by having demo days and forums.

Make effective use of tools:

- Make sure your team can choose their tools.

If your organization must standardize tools, ensure that procurement and finance are acting in the interests of teams, not the other way around.

- Make monitoring a priority.

Quotes

A good leader affects a team’s ability to deliver code, architect good systems, and apply Lean principles to how the team manages its work and develops products. All of these have a measurable impact on an organization’s profitability, productivity, and market share.

Servant leaders focus on their followers’ development and performance, whereas transformational leaders focus on getting followers to identify with the organization and engage in support of organizational objectives.

Though we often hear stories of DevOps and technology transformation success coming from the grassroots, it is far easier to achieve success when you have leadership support.

Our research shows that three things are highly correlated with software delivery performance and contribute to a strong team culture: cross-functional collaboration, a climate for learning, and tools.

Part 2 - The Research

Chapter 12 - The Science Behind This Book

Actionable Takeaways

- When creating your own surveys, use a Likert-type scale

Notes

- Primary Research

- Data collected by the team

- Secondary Research

- Data collected by someone else

This book is based on primary research.

- Qualitative Research

- Data isn't in numerical form, e.g. interviews, blog posts, long-form log data

- Quantitative Research

- Data includes numbers, e.g. stock data, survey data (if numerical).

This book's research is quantitative.

Likert-Type Scale

A Likert-type scale records responses and assigns them a number value. For example, “Strongly disagree” would be given a value of 1, neutral a value of 4, and “Strongly agree” a value of 7. This provides a consistent approach to measurement across all research subjects, and provides a numerical base for researchers to use in their analysis.
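As a rough illustration of the idea above, here is a minimal Python sketch of a 7-point Likert-type scale. Only the three anchor values (1, 4, 7) come from the description above; the intermediate labels, the function names, and the sample answers are assumptions made up for this example.

```python
# Hypothetical 7-point Likert-type scale. Only the values for
# "Strongly disagree" (1), neutral (4), and "Strongly agree" (7) come
# from the notes; the other labels are assumptions for illustration.
LIKERT_7 = {
    "Strongly disagree": 1,
    "Disagree": 2,
    "Somewhat disagree": 3,
    "Neither agree nor disagree": 4,
    "Somewhat agree": 5,
    "Agree": 6,
    "Strongly agree": 7,
}


def score_responses(responses):
    """Convert text responses to their numeric values."""
    return [LIKERT_7[r] for r in responses]


def mean_score(responses):
    """Average numeric score for one survey statement."""
    scores = score_responses(responses)
    return sum(scores) / len(scores)


# Made-up answers to a single survey statement.
answers = [
    "Agree",
    "Strongly agree",
    "Neither agree nor disagree",
    "Somewhat disagree",
]
print(score_responses(answers))  # [6, 7, 4, 3]
print(mean_score(answers))       # 5.0
```

Because every respondent's answer maps to the same numeric values, the scores can be averaged and compared across respondents and over time.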

TYPES OF STATISTICAL ANALYSIS

- Descriptive (data is summarized and reported, e.g. a census)

- Exploratory (looks for relationships among the data. Includes correlation, but not causation)

- Inferential predictive (hypothesis-driven, but using "field data" because lab conditions aren't practical)

- Predictive (used to forecast future events based on previous events)

- Causal (generally requires randomized studies to determine cause and effect)

- Mechanistic (calculate exact changes to make to variables to cause exact behaviors that will be observed under certain conditions)

The analyses in the book fall into the first three categories. An additional type used was "classification."

- Classification Analysis

- Classification variables are entered into the clustering algorithm and significant groups are identified.

- Population

- "The entire group of something you are interested in researching."

- Sample

- "A portion of the population that is carefully defined and selected."
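To make the classification analysis item above more concrete, here is a small Python sketch that groups teams by two variables with a clustering loop. It is an illustration only: these notes don't say which clustering algorithm the authors used, so a basic k-means loop stands in for it, and the team scores, the variable names, and the starting centroids are all made up.

```python
import math


def kmeans(points, centroids, iterations=10):
    """Basic k-means: assign each point to its nearest centroid, then
    move each centroid to the mean of the points assigned to it."""
    centroids = list(centroids)
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: index of the nearest centroid for each point.
        labels = [
            min(range(len(centroids)), key=lambda k: math.dist(p, centroids[k]))
            for p in points
        ]
        # Update step: recompute each centroid from its members.
        for k in range(len(centroids)):
            members = [p for p, label in zip(points, labels) if label == k]
            if members:  # keep the old centroid if a cluster is empty
                centroids[k] = tuple(
                    sum(axis) / len(members) for axis in zip(*members)
                )
    return labels, centroids


# Made-up (tempo, stability) scores for six hypothetical teams.
teams = [(6.5, 6.0), (6.0, 6.5), (4.0, 4.2), (3.8, 4.0), (1.5, 2.0), (2.0, 1.5)]

# Assumption: start from three of the teams as initial centroids.
labels, centroids = kmeans(teams, [teams[0], teams[2], teams[4]])
print(labels)  # [0, 0, 1, 1, 2, 2]
```

The point is that the groups come out of the data rather than being defined up front; the analyst only chooses which variables go into the algorithm.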

Quotes

In our research, we applied [classification] statistical method using the tempo and stability variables to help us understand and identify if there were differences in how teams were developing and delivering software, and what those differences looked like.

The research presented in this book covers a four-year time period, and was conducted by the authors. Because it is primary research, it is uniquely suited to address the research questions we had in mind—specifically, what capabilities drive software delivery performance and organizational performance? This project was based on quantitative survey data, allowing us to do statistical analyses to test our hypotheses and uncover insights into the factors that drive software delivery performance.

Chapter 13 - Introduction to Psychometrics

Actionable Takeaways

- Push polls are not a valid, unbiased type of survey

- Writing excellent surveys is difficult and requires training

- The Net Promoter Score (NPS) is an example of a good quick survey (a survey with one question).
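For reference, a short Python sketch of how an NPS is usually calculated. This follows the common NPS convention (0-10 ratings, 9-10 counted as promoters, 0-6 as detractors) rather than anything stated in these notes, and the ratings are made up.

```python
def net_promoter_score(ratings):
    """Return the NPS for a list of 0-10 ratings.

    Common convention: 9-10 are promoters, 7-8 are passives,
    0-6 are detractors. NPS = % promoters - % detractors.
    """
    if not ratings:
        raise ValueError("ratings must not be empty")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100 * (promoters - detractors) / len(ratings)


# Made-up answers to the single "how likely are you to recommend us?" question.
ratings = [10, 9, 9, 8, 7, 6, 3, 10, 9, 5]
print(net_promoter_score(ratings))  # 20.0
```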

Notes

This chapter should have been called, "Why Do We Trust Our Data?"

- Latent Constructs

- Something that can't be measured directly, e.g. corporate culture. A clear definition and careful questions about its observable characteristics (manifest variables) can measure it indirectly.

The team were very careful to validate that the data is correct, using several statistical methods. In short, they prove the data is telling them what they think it's telling them.

Validity and reliability tests come before analysis.

Quotes

The two most common questions we get about our research are why we use surveys in our research (a question we will address in detail in the next chapter) and if we are sure we can trust data collected with surveys (as opposed to data that is system-generated). This is often fueled by doubts about the quality of our underlying data—and therefore, the trustworthiness of our results.

Skepticism about good data is valid, so let’s start here: How much can you trust data that comes from a survey? Much of this concern comes from the types of surveys that many of us are exposed to: push polls (also known as propaganda surveys), quick surveys, and surveys written by those without proper research training.

Push polls are those with a clear and obvious agenda—their questions are difficult to answer honestly unless you already agree with the “researcher’s” point of view.

- Latent constructs help us think carefully about what we want to measure and how we define our constructs.

- They give us several views into the behavior and performance of the system we are observing, helping us eliminate rogue data.

- They make it more difficult for a single bad data source (whether through misunderstanding or a bad actor) to skew our results.

Chapter 14 - Why Use a Survey

Actionable Takeaways

- Surveys are often an excellent way to gather and measure data.

Notes

The researchers sometimes had to explain why surveys could be trusted, and used three questions:

- Do you trust survey data? (often answered "no")

- Do you trust your system or log data? (often answered "yes")

- Have you ever seen bad data come from your system? (um...yes)

The point of the third question is that software and system data also come from humans. Humans make mistakes. They can also introduce intentional errors, for example a user with root access changing data in log files. This is pretty bad, since system data affected by a bad actor can go unnoticed for months or years, or never be discovered. But if a few people lie on surveys, that won't significantly change the data. And coordinated lying would be difficult to accomplish. [Note: especially for this research, which uses surveys across companies.]
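The argument above, that a few dishonest answers barely move survey results, can be checked with some quick arithmetic. The Python sketch below uses made-up numbers: 200 honest responses on a 7-point scale and 5 respondents who deliberately give the lowest score. The `mean_shift` helper is hypothetical, written just for this example.

```python
from statistics import mean, median


def mean_shift(honest, dishonest):
    """How far the mean moves when dishonest answers are mixed in."""
    return mean(honest + dishonest) - mean(honest)


# Made-up data: 200 honest responses on a 7-point scale (mean of 5).
honest = [4, 5, 5, 6] * 50

# Assumption: 5 respondents deliberately answer with the lowest score.
dishonest = [1] * 5

print(round(mean_shift(honest, dishonest), 2))  # -0.1
print(median(honest + dishonest))               # 5
```

With about 2% of respondents lying as hard as they can, the mean moves by roughly a tenth of a point and the median doesn't move at all. A single edited log file has no such built-in dilution.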

Quotes

Teams wanting to understand the performance of their software delivery process often begin by instrumenting their delivery process and toolchain to obtain data (we call data gathered in this way “system data” throughout this book).

There are several reasons to use survey data. We’ll briefly present some of these in this chapter.

Surveys allow you to collect and analyze data quickly.

Measuring the full stack with system data is difficult.

"Without asking people about the performance of the system, the team would not have understood what was going on. Taking time to do periodic assessments that include the perceptions of the technologists that make and deliver your technology can uncover key insights into the bottlenecks and constraints in your system."

Measuring completely with system data is difficult.

People won’t have perfect knowledge or visibility into systems either--but if you ignore the perceptions and experience of the professionals working on your systems entirely, you lose an important view into your systems.

- You can trust survey data.

- Some things can only be measured through surveys.

But if you want to know how they feel about the work environment and how supportive it is to their work and their goals—if you want to know why they’re behaving in the way you observe—you have to ask them. And the best way to do that in a systematic, reliable way that can be compared over time is through surveys.

And it is worth asking. Research has shown that organizational culture is predictive of technology and organizational performance, is predictive of performance outcomes, and that team dynamics and psychological safety are the most important aspects in understanding team performance.

Chapter 15 - The Data for the Project

Actionable Takeaways

Notes

The population was representative.

Quotes

We did not collect data from professionals and organizations who were not familiar with things like configuration management, infrastructure-as-code, and continuous integration. By not collecting data on this group, we miss a cohort that are likely performing even worse than our low performers.

Special care was also taken to send invitations to groups that included underrepresented groups and minorities in technology.

To expand our reach into the technologists and organizations developing and delivering software, we also invited referrals. This aspect of growing our initial sample is called referral sampling or snowball sampling.... Snowball sampling was an appropriate data collection method for this study for several reasons:

- Identifying the population of those who make software using DevOps methodologies is difficult or impossible.

- The population is typically and traditionally averse to being studied.

Part 3 - Transformation

Chapter 16 - High-Performance Leadership and Management

Actionable Takeaways

- Understand why, don't just copy behaviors

Notes

This chapter is a review of actual changes put in place by development management "at ING Netherlands, a global bank with over 34.4 million customers worldwide and with 52,000 employees, including more than 9,000 engineers,..."

The chapter examines a "high-performing management framework in practice."

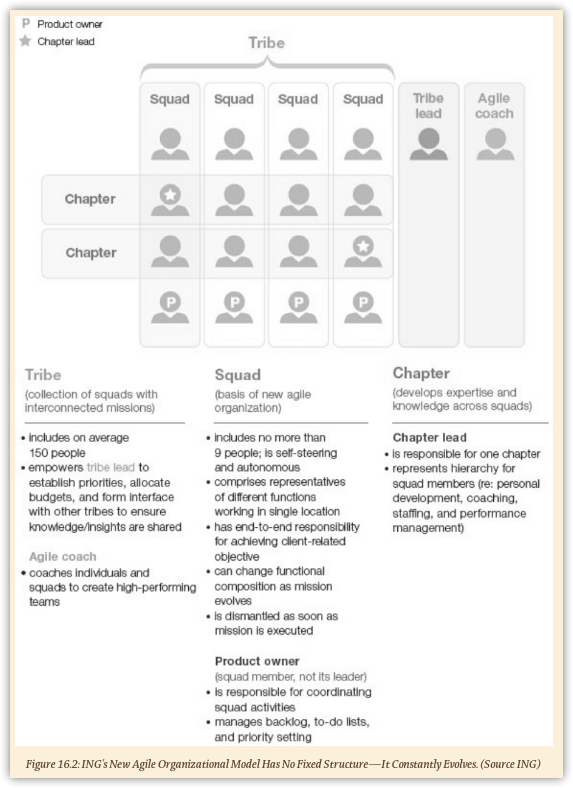

Structure at ING:

- A Tribe is a collection of squads with interconnected missions

- A Squad is a group of nine or fewer people. It is self-steering, autonomous, end-to-end responsible for delivering a client-related objective, and dismantled as soon as the mission is executed.

- The Product Owner coordinates squad activities and manages the backlog. The Product Owner is a squad member, not a leader.

- A Chapter develops expertise and knowledge across squads

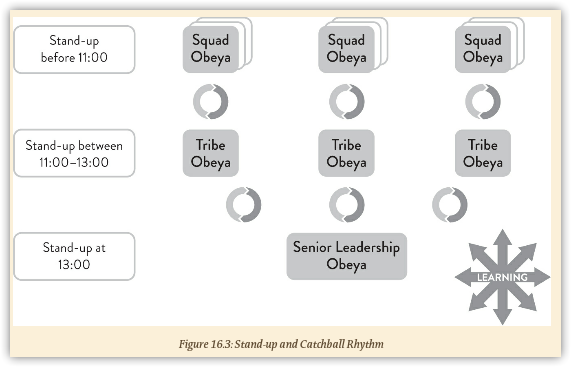

- Obeya

- Japanese for war room

Even if squads had differences, such as different kanban board columns, there was commonality so that key metrics could be clearly seen and understood by anyone entering the obeya.

Meetings: This structure leads to important information being relayed and acted upon across squads.

Quotes

In addition to regular stand-ups with squads, product owners, IT-area leads, and chapter leads, the tribe lead also regularly visits the squads to ask questions—not the traditional questions like “Why isn’t this getting done?” but, rather, “Help me better understand the problems you’re encountering,” “Help me see what you’re learning,” and “What can I do to better support you and the team?” This kind of coaching behavior does not come easily to some leaders and managers. It takes real effort, with coaching, mentoring, and modeling (mentoring is being piloted within the Omnichannel Tribe, with plans for expansion) to change behavior from the traditional command-and-control to leaders-as-coaches where everyone’s job is to (1) do the work, (2) improve the work, and (3) develop the people. The third objective—develop the people—is especially important in a technology domain, where automation is disrupting many technology jobs. For people to bring their best to the work that may, in fact, eliminate their current job, they need complete faith that their leaders value them—not just for their present work but for their ability to improve and innovate in their work. The work itself will constantly change; the organization that leads is the one with the people with consistent behavior to rapidly learn and adapt.

"There was a tough deadline and lots of pressure. Our tribe lead, Jannes, went to the squads and said, 'If the quality isn’t there, don’t release. I’ll cover your back.' So, we felt we owned quality. That helped us to do the right things."

“As a leader, you have to look at your own behaviors before you ask others to change,” says Jannes.

“Senior management is very happy with us,” he adds with a broad smile, obviously proud of the people in his tribes. “We give them speed with quality. Sometimes, we may take a little longer than some of the others to reach green, but once we achieve it, we tend to stay green, when a lot of the others go back to red.”

We are often asked by enterprise leaders: How do we change our culture?

We believe the better questions to ask are: How do we learn how to learn? How do I learn? How can I make it safe for others to learn? How can I learn from and with them? How do we, together, establish new behaviors and new ways of thinking that build new habits, that cultivate our new culture? And where do we start?

You can’t “implement” culture change. Implementation thinking (attempting to mimic another company’s specific behavior and practices) is, by its very nature, counter to the essence of generative culture. [emphasis mine]

As you begin your own path to creating a learning organization, it’s important to adopt and maintain the right mindset. Below are some suggestions we offer, based on our own experiences in helping enterprises evolve toward a high-performing, generative culture:

- Develop and maintain the right mindset.

- Make it your own: 1) Don't just copy. 2) Don't contract your change out to a large consulting firm. Your teams will feel that these methodologies are being done to them. 3) Develop your own coaches. Initially you may need to hire outside coaching....

- You, too, need to change your way of work. A generative culture starts with demonstrating new behaviors, not delegating them. [emphasis mine]

Comment for me? Send an email. I might even update the post!