Memstate: The Practical Argument for Big, In-Memory Data

2019-06-28 17:45

Today I listened to a fascinating episode of Jamie Taylor's The .NET Core Podcast, featuring an interview with developer Robert Friberg. Do listen to it.

Friberg and his team have been working on Memstate, an in-memory database. When I first started listening, I thought "this sounds like one of those interesting, technical edge-cases, but I'm not sure I see the point." By the end, I not only understood, I wanted to start using it! But since I don't know how soon that will happen, here's the compelling meat.

Relational databases solve a problem that doesn't exist anymore. That problem is limited memory relative to dataset size.

Your OLTP data can probably all fit in RAM.

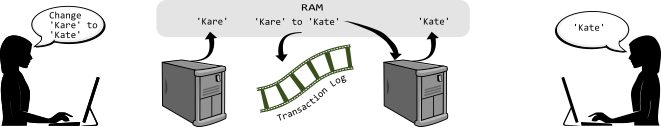

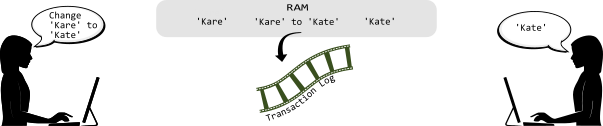

Here, simplistically, is how Memstate looks compared to a relational database.

In a relational database, the data is: Read into Memory > Changed in Memory > Saved to Transaction Log > Saved to Storage > (Potentially) Reread into Memory

In a memory image, the data is: Already in Memory > Changed in Memory > Change Saved to Transaction Log
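
To make the memory-image path concrete, here is a minimal sketch in Python. It is not Memstate's API (Memstate is a .NET library); the `MemoryImage` class, the `adjust` and `level` methods, and the SKU names are all made up for illustration, and a plain list stands in for a durable transaction log.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryImage:
    """Hypothetical memory image: state lives in RAM, only changes are logged."""

    stock: dict = field(default_factory=dict)  # the data, already in memory
    log: list = field(default_factory=list)    # stand-in for a durable transaction log

    def adjust(self, sku: str, delta: int) -> int:
        # Changed in memory...
        self.stock[sku] = self.stock.get(sku, 0) + delta
        # ...then only the change itself is saved, never the whole state.
        self.log.append(("adjust", sku, delta))
        return self.stock[sku]

    def level(self, sku: str) -> int:
        # Reads are answered from memory; storage is never touched.
        return self.stock.get(sku, 0)


image = MemoryImage()
image.adjust("BILLY", 40)
image.adjust("BILLY", -3)
print(image.level("BILLY"))  # 37
print(len(image.log))        # 2
```

A real system would write the log entry to durable storage before acknowledging the change, but the shape of the write path is the same: one small append per change, and no reread.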

The idea of keeping all needed data in RAM has been around for a while. Friberg recommends reading Martin Fowler's description of the pattern, and so do I. This tidbit helped me grasp how common the concept is:

> The key element to a memory image is using event sourcing.... A familiar example of a system that uses event sourcing is a version control system. Every change is captured as a commit, and you can rebuild the current state of the code base by replaying the commits into an empty directory.
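
The replay idea in that quote can be sketched in a few lines of Python. This is only an illustration of event sourcing in general, not how any particular version control system or Memstate stores its history; the event tuples and the `replay` function are invented for the example.

```python
def replay(events):
    """Rebuild state by applying every recorded event, in order, to an empty dict."""
    state = {}
    for kind, key, value in events:
        if kind == "set":
            state[key] = value
        elif kind == "delete":
            state.pop(key, None)
        else:
            raise ValueError(f"unknown event kind: {kind}")
    return state


# A tiny "commit history" for a hypothetical code base.
events = [
    ("set", "README", "v1"),
    ("set", "main.py", "print('hi')"),
    ("set", "README", "v2"),
    ("delete", "main.py", None),
]

current = replay(events)        # state after all four events
earlier = replay(events[:2])    # state as it was after the first two
print(current)  # {'README': 'v2'}
print(earlier)
```

Because the history is the source of truth, any earlier state is available too: replay a prefix of the events and stop.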

I think this is fantastically straightforward. Why persist state on every write? Why not just persist the change, and keep the state in memory? In the past, the answer was "We can't fit all the data into memory, so you have to read it each time." But as Friberg points out, you can configure an Azure virtual machine instance with 1 terabyte of RAM!

Friberg is clear that this method, like any, shouldn't be applied to all use cases. He nicely points out that even if you kept, for (his) example, all of IKEA's inventory levels for all its warehouses in memory, you wouldn't keep all the product descriptions and images. Consider what we already do today with blob assets:

- We don't (typically) store images in version control. We reference them externally.

- We keep images in cache because they're static.

(And, of course, it also doesn't mean you couldn't keep that data in memory.)

Imagine if your order entry system's data lived fully in memory. Now, ask--not rhetorically--could it? The answer will very likely be yes.

What happens if you need to perform maintenance? Surely it would take forever to replay all those millions of commands (transactions).

Friberg gives an example where a very large dataset could be loaded back into memory in about ten minutes.

Finally, while most of the podcast discussed big data, I wonder about small data. One of the persistence stores for Memstate is--you guessed it--a file. I think this would be a great solution for any app that needs a small database. The state would load fast, the app would run fast, and there wouldn't be any of the overhead of using a database engine.

Want to go a step further? If the store is a file, and if the amount of data isn't huge, and if absolute top performance isn't necessary, then I'll bet the transaction information could be stored as plain text. And this means your data and history are future-proof.
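
Here is a rough sketch of what I have in mind, in Python. To be clear, this is my own guess at the idea, not Memstate's file format or API: the one-JSON-object-per-line layout, the `append_command` and `load_state` functions, and the contact-list example are all assumptions.

```python
import json
import os
import tempfile


def append_command(path, command):
    # One JSON object per line, so the journal is readable in any text editor.
    with open(path, "a", encoding="utf-8") as journal:
        journal.write(json.dumps(command) + "\n")
        journal.flush()
        os.fsync(journal.fileno())  # ask the OS to push the line to disk


def load_state(path):
    # Replay the journal from the top to rebuild the in-memory state.
    contacts = {}
    if not os.path.exists(path):
        return contacts
    with open(path, encoding="utf-8") as journal:
        for line in journal:
            if not line.strip():
                continue
            command = json.loads(line)
            if command["op"] == "put":
                contacts[command["name"]] = command["email"]
            elif command["op"] == "remove":
                contacts.pop(command["name"], None)
    return contacts


# Write to a throwaway directory so the example leaves nothing behind in the app folder.
journal_path = os.path.join(tempfile.mkdtemp(), "contacts.journal")
append_command(journal_path, {"op": "put", "name": "Ada", "email": "ada@example.com"})
append_command(journal_path, {"op": "put", "name": "Bob", "email": "bob@example.com"})
append_command(journal_path, {"op": "remove", "name": "Bob"})

contacts = load_state(journal_path)
print(contacts)  # {'Ada': 'ada@example.com'}
```

The journal on disk is three lines of ordinary text. Anything that can read a text file and parse JSON can rebuild the state, decades from now, with or without the original app.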

And that's how I like my data.